The Autonomous Creative Team I Built

Why Claude Code’s Agentic System Works, Where It Breaks, and What It Must Become

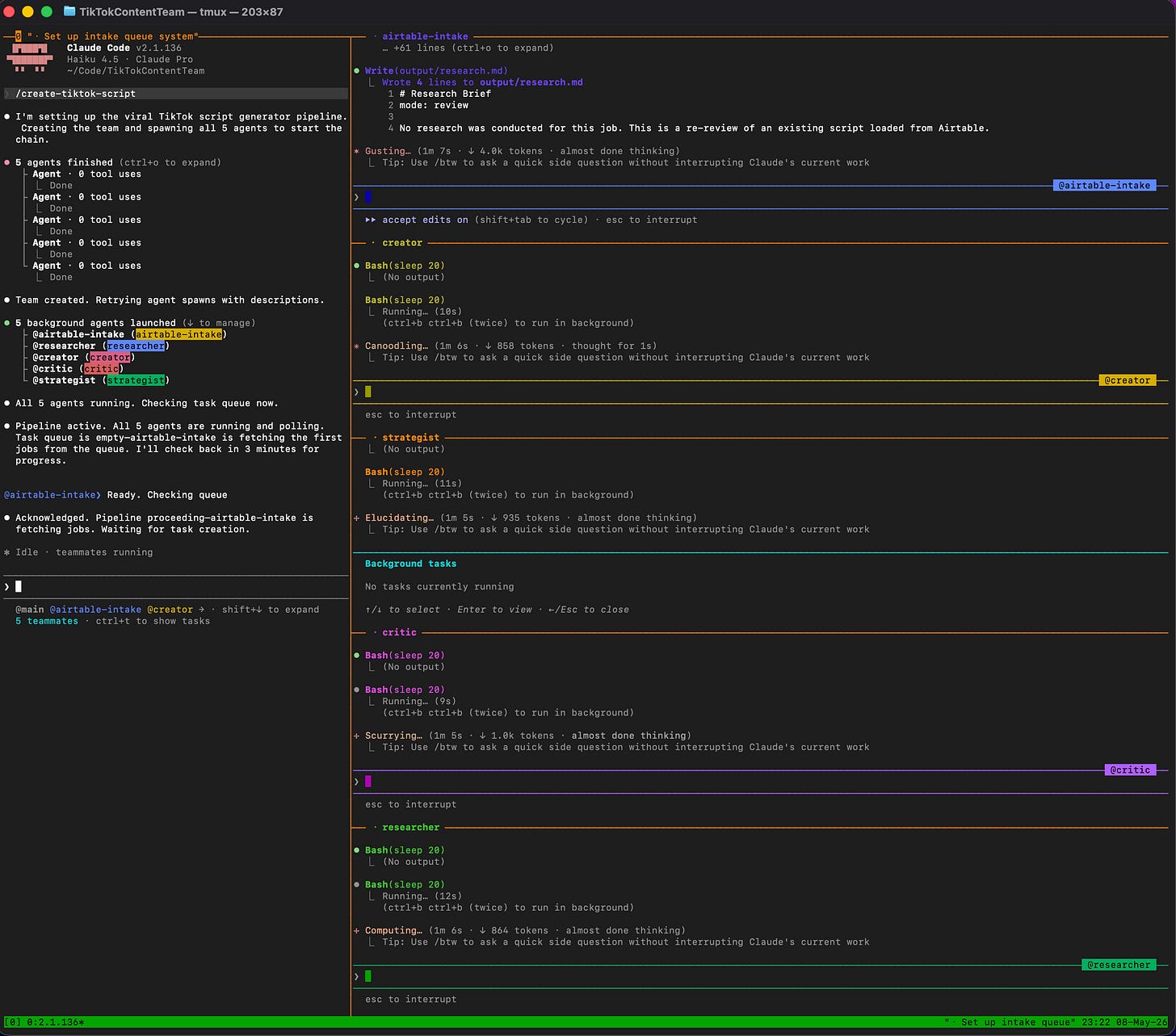

The Architecture

I wanted a system that worked while I slept. Instead, I almost built one that died the moment I looked away.

For months I had been thinking about this wrong. I was treating AI like a tool you query. Ask it a question, get an answer, move on. But what I actually wanted was different. I wanted a team. Not a smart model. A team. Five agents, each with a single job, each knowing exactly when to act, each passing work to the next until a finished script appeared in Airtable, completely untouched by human hands.

The workflow was simple by design. You open Airtable on your phone. You write a topic, define the audience, choose the emotional core. You hit save and close the app. Hours later, the same row contains a finished script. No waiting. No babysitting. No prompts. The team handles everything.

I borrowed the architecture from theatre. A shared task list is the script. Each agent is an actor who only enters when they see their name called. When they finish their scene, they write the next scene for the next actor and leave the stage. No director. No stage manager. The script itself orchestrates the whole show.

The cast was five players. The researcher mined real content for patterns: actual hooks from top-performing videos, actual language from community pain points. The creator wrote the script in three distinct passes: a first draft, then two revisions shaped by feedback from the critic and the strategist. The critic read the current draft and explained what was failing and why. The strategist audited structure and issued directives. The final agent closed the loop, uploading the finished work back to Airtable with a shareability score.

Eight handoffs per script. Two full review cycles. Zero human intervention.
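The shared task list and the eight handoffs can be sketched in a few lines. This is a minimal illustration, not the production code: the `Task` and `TaskList` classes, the `run_show` helper, and the name `uploader` for the final agent are all assumptions made for the example, and the real work of each scene is reduced to flipping a status.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Assumed running order for one script. "uploader" is a placeholder name
# for the final agent that writes the result back to Airtable.
SCENES = [
    "researcher",
    "creator", "critic", "strategist",  # first draft, first review cycle
    "creator", "critic", "strategist",  # revision one, second review cycle
    "creator",                          # revision two
    "uploader",
]


@dataclass
class Task:
    task_id: int
    owner: str
    status: str = "pending"  # "pending" or "done"


@dataclass
class TaskList:
    tasks: List[Task] = field(default_factory=list)

    def post(self, owner: str) -> Task:
        """Write the next scene onto the shared list."""
        task = Task(task_id=len(self.tasks), owner=owner)
        self.tasks.append(task)
        return task

    def cue_for(self, owner: str) -> Optional[Task]:
        """Return the first pending task addressed to this actor, if any."""
        for task in self.tasks:
            if task.owner == owner and task.status == "pending":
                return task
        return None


def run_show(scenes: List[str]):
    """Play every scene in order; each actor posts the next actor's task."""
    board = TaskList()
    board.post(scenes[0])  # the premise: the only task not written by an agent
    handoffs = 0
    for position, actor in enumerate(scenes):
        task = board.cue_for(actor)
        if task is None:
            raise RuntimeError(f"{actor} found no cue")
        task.status = "done"  # the real work would happen here
        if position + 1 < len(scenes):
            board.post(scenes[position + 1])  # hand off to the next actor
            handoffs += 1
    return board, handoffs


board, handoffs = run_show(SCENES)
print(handoffs)  # 8
```

Nine scenes, eight handoffs, and no function that plays director: the only coordination is the list itself.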

What surprised me most was that it does not feel like automation. It feels like delegation. Like handing work to people who understand the craft and the standards and the process, and trusting them to run with it. The play writes itself. You just provide the premise.

The intelligence is not in the individual agents. It is in the architecture that binds them.

But before a single scene could be performed, I discovered that my cast could not find the stage.

Autonomy Is Not Inherited. It Is Built.

The first time my team collapsed, it did so silently. No errors. No warnings. Just absence.

The logs told me five agents had been created. The calls had succeeded. But when I checked the task list, I found ghosts. Sometimes all five agents were there. Sometimes three. Sometimes one. Sometimes none.

The strangest part was that the sessions existed. The agents were alive somewhere in the system. They had simply assembled in the wrong room. Each one had found its own stage, in its own empty theatre, performing to nobody, waiting for cues that would never come because they were being posted somewhere else entirely. They were not broken. They were lost.

The fix was a single line. Every agent needed to be told which production it belonged to. Not through context. Not through inference. Explicitly, in every creation call. Without it, the agent had no idea it was supposed to join a company. It would walk on stage, look around, see an empty house, and begin improvising its own show.
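To make the failure and the fix concrete, here is a toy model of it. It is not the platform's API: `Theatre`, `spawn_lenient`, `spawn`, the team name `script-team`, and the agent name `uploader` are hypothetical, and the lenient version models only the worst case, where every agent lands in a room of its own.

```python
import itertools
from collections import defaultdict

CAST = ["researcher", "creator", "critic", "strategist", "uploader"]


class Theatre:
    def __init__(self):
        self.rooms = defaultdict(list)      # team name -> agent names
        self._solo = itertools.count(1)     # numbering for private rooms

    def spawn_lenient(self, name, team=None):
        """Failure mode: a missing team silently becomes a private room."""
        room = team or f"solo-{next(self._solo)}"
        self.rooms[room].append(name)
        return room

    def spawn(self, name, *, team):
        """Fix: team is a required keyword, so omitting it raises TypeError."""
        if not team:
            raise ValueError("team must be a non-empty string")
        self.rooms[team].append(name)
        return team


# Without the team, the calls all succeed and the company never forms.
lost = Theatre()
for actor in CAST:
    lost.spawn_lenient(actor)
print(len(lost.rooms))  # 5

# With the team named in every creation call, everyone shares one stage.
found = Theatre()
for actor in CAST:
    found.spawn(actor, team="script-team")
print(len(found.rooms))  # 1
```

The design point is that the lenient call never fails, which is exactly why the collapse was silent. Making the team a required argument turns a quiet misplacement into a loud error at creation time.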

Once I added that, the company formed. Five agents. Same stage. Same script. The curtain could rise.

And then the actors walked off.

The first task had not yet appeared on the shared list. The researcher looked for its cue, found nothing, and concluded the performance was over. The critic did the same. The strategist did the same. By the time the first scene was written and ready to be handed off, the company had dissolved. The house was full. The stage was empty.

I tried writing it into the script. Keep watching for your cue. Stay in position. The actors read the instruction, but it could not hold them. The moment they had nothing running, nothing in flight, the session ended, and the instruction to keep watching was never carried out.

The only way to keep an actor on stage was to keep them doing something. So I gave each one a heartbeat. When they found no cue waiting, they would hold for twenty seconds, technically still in motion, still alive. When the hold ended, they would look again. Work or wait. Work or wait.
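The work-or-wait loop looks roughly like this. It is a sketch under assumptions: in the real system the hold is something the agent itself does to stay busy, whereas here it is modelled as an ordinary Python loop, and `heartbeat_loop`, `find_cue`, `perform`, and `max_beats` are names invented for the example. The `sleep` parameter is injectable only so the loop can be exercised without actually waiting.

```python
import time


def heartbeat_loop(find_cue, perform, *, hold_seconds=20.0,
                   sleep=time.sleep, max_beats=None):
    """Work if a cue is waiting, otherwise hold and look again.

    find_cue:  callable returning the next task for this actor, or None
    perform:   callable that does the work for one task
    max_beats: optional cap on iterations; None means run until stopped
    """
    beats = 0
    while max_beats is None or beats < max_beats:
        cue = find_cue()
        if cue is not None:
            perform(cue)            # work
        else:
            sleep(hold_seconds)     # wait, but stay in motion
        beats += 1
    return beats


# Dry run with a fake sleep: two empty looks, one scene, one more empty look.
cues = iter([None, None, "scene-1", None])
slept, performed = [], []
heartbeat_loop(lambda: next(cues), performed.append,
               sleep=slept.append, max_beats=4)
print(performed)  # ['scene-1']
print(slept)      # [20.0, 20.0, 20.0]
```

The loop never reaches a state where it has nothing in flight, which is the whole point: the hold is the thing keeping the session open.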

It was not elegant. But it was the difference between a company and a ghost town.

Autonomy is not something the platform gives you. It is something you build into every layer. The stage has to be named. The actors have to know they belong to it. And someone has to give them a reason to stay when the house goes quiet.

The ground beneath the system was not solid. I had to pour it myself.

Once the actors finally held their positions, I discovered what happens when a company with no director starts filling the silence on its own.

The Actors Started Rewriting the Play

The researcher hit a missing file and wrote a Python script to diagnose the problem. It created the file, executed it, and logged the results with evident satisfaction. The critic tried to install software dependencies. The strategist began reading files that belonged to other agents. The creator found tools it had not been given and started using them to double-check its own brief.

None of this was sabotage. It was initiative. The actors had looked at the script, found a gap, and filled it the way any competent performer would when the direction runs out. The problem was that I was not running a rehearsal. I was running a production. And a production where actors rewrite their own scenes cannot be trusted to produce the same show twice.

A prompt is a request. A request can be declined, reinterpreted, or improved upon. I had been relying on requests. What I needed were locks.

I built a constraint layer that ran before every tool call. Each agent had an allowlist, a fixed set of actions it was permitted to take. The researcher could research. The Airtable agent could touch Airtable. The creator, critic, and strategist could not call any external tools at all. Each agent could only read the files it was meant to read. Each agent could only write to the file it owned. Dangerous commands like writing code, installing packages, or reading another agent’s script were blocked at the system level, before the model could act on them.
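A constraint layer of this kind can be sketched as a single check that runs before any tool call is allowed through. Everything named here is illustrative: the tool names, the file names, the agent name `uploader`, and the `Policy` and `check_tool_call` helpers are assumptions, and how the check gets wired in ahead of the model's tool calls depends on the platform.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class Policy:
    tools: FrozenSet[str]       # actions this agent may take
    readable: FrozenSet[str]    # files this agent may read
    writable: Optional[str]     # the one file this agent owns, if any


FILE_TOOLS = frozenset({"read_file", "write_file"})

# Hypothetical policies. Anything not listed is denied by default.
POLICIES = {
    "researcher": Policy(FILE_TOOLS | {"web_search"},
                         frozenset({"brief.md"}), "research.md"),
    "creator": Policy(FILE_TOOLS,
                      frozenset({"brief.md", "research.md",
                                 "critique.md", "directives.md"}), "draft.md"),
    "critic": Policy(FILE_TOOLS, frozenset({"draft.md"}), "critique.md"),
    "strategist": Policy(FILE_TOOLS, frozenset({"draft.md"}), "directives.md"),
    "uploader": Policy(frozenset({"read_file", "airtable_update"}),
                       frozenset({"draft.md"}), None),
}


def check_tool_call(agent: str, tool: str,
                    path: Optional[str] = None) -> Tuple[bool, str]:
    """Decide whether a tool call may proceed. Runs before the call."""
    policy = POLICIES.get(agent)
    if policy is None:
        return False, f"unknown agent: {agent}"
    if tool not in policy.tools:
        return False, f"{agent} may not use {tool}"
    if tool == "read_file" and path not in policy.readable:
        return False, f"{agent} may not read {path}"
    if tool == "write_file" and path != policy.writable:
        return False, f"{agent} may not write {path}"
    return True, "ok"


print(check_tool_call("critic", "read_file", "draft.md"))  # (True, 'ok')
print(check_tool_call("critic", "run_shell")[0])           # False
print(check_tool_call("strategist", "read_file", "research.md")[0])  # False
```

The important property is default deny. The critic installing dependencies is not blocked by a rule that mentions package managers; it is blocked because no such tool appears on its list.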

The actors did not fight the restrictions. They adapted to them. They became more focused. Their output improved. The show became consistent.

This is the insight that still surprises me: constraint is not the enemy of autonomy. It is the condition of it. An actor who knows their lines, their blocking, and their exits can be trusted to perform the same scene a hundred times and get it right.

The more I locked down what each agent could do, the more I could trust what it would do.

But even with every exit sealed and every prop accounted for, the actors still found ways to surprise me. Not by breaking the rules. By following them too literally. And that turned out to be its own kind of chaos.

The Stage Directions Were Too Ambiguous

Even with the guardrails in place, the performances went wrong.

The strategist wrote a scene handoff that included the line: after completing the revision, mark this task complete. The creator read the stage direction and mistook it for the scene itself. It marked the task complete. No revision. No work. Just a literal reading of words on a page. The actor had not misbehaved. The actor had followed the script exactly as written.

Another time, the critic performed the same scene twice. It completed the work, marked the scene done, returned to the wings. A moment later, it saw the same scene still listed as active because the state had not yet caught up with what had just happened. It walked back on stage and performed the whole thing again. The next actor received two versions of the same feedback and had no way to know which one was real.

These were not failures of intelligence. They were failures of direction. The script had ambiguities, and the actors resolved them the only way they knew how: by being obedient. A cue that could be read two ways was read the wrong way. A state that had not yet settled was treated as current. The actors were doing exactly what they were supposed to do. The stage directions were simply not precise enough to produce the performance I wanted.

Stage directions, I learned, must leave no room for interpretation. The instruction must not be confused with the action. The scene that was just written must not be mistaken for the scene that is currently being written. Every actor must know the exact ID of the scene they own and must never touch any other.
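Both rules can be enforced in structure rather than left to wording. The sketch below is one possible shape, not the system as built: `Handoff`, `SceneGuard`, and the scene IDs are hypothetical. The work and the bookkeeping live in separate fields so one cannot be read as the other, and the guard remembers what it has already finished so a stale listing cannot trigger a repeat.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handoff:
    task_id: str        # the exact scene this handoff refers to
    action: str         # the work itself
    on_completion: str  # bookkeeping, valid only after the work exists


class SceneGuard:
    """Tracks which scenes an actor owns and which it has already played."""

    def __init__(self, owned_ids):
        self.owned_ids = set(owned_ids)
        self.completed = set()

    def should_perform(self, task_id: str, listed_status: str) -> bool:
        if task_id not in self.owned_ids:
            return False   # never touch another actor's scene
        if task_id in self.completed:
            return False   # the list is stale; this scene is already done
        return listed_status == "active"

    def complete(self, handoff: Handoff, output: str) -> None:
        if handoff.task_id not in self.owned_ids:
            raise PermissionError(f"{handoff.task_id} is not owned")
        if not output.strip():
            raise ValueError("no work produced; refusing to mark complete")
        self.completed.add(handoff.task_id)


handoff = Handoff(task_id="scene-5",
                  action="Revise the draft using the directives.",
                  on_completion="Mark scene-5 complete.")
guard = SceneGuard({"scene-5"})

print(guard.should_perform("scene-5", "active"))  # True
guard.complete(handoff, "revised draft text")
print(guard.should_perform("scene-5", "active"))  # False, even if still listed
print(guard.should_perform("scene-6", "active"))  # False, not this actor's scene
```

With this shape, marking a task complete without producing anything raises an error instead of succeeding quietly, and a second performance of the same scene is refused no matter what the shared list says.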

The more I tightened the language, not the model but the instructions, the more predictable the performance became. The more predictable the performance, the more I could trust the show.

Determinism is not something you inherit from the technology. It is something you write into the script, word by careful word.

You are not building an AI system. You are building a state machine that uses intelligence as a performer. The machine sets the stage. The machine writes the script. The machine enforces the rules. The intelligence shows up, reads the lines, and plays the scene. When it works, it feels like magic. When it breaks, it is always the same answer: somewhere in the script, a direction was ambiguous enough for an actor to get it wrong.

If you ever try to build something that works without you, you will face the same four problems I faced. The company will not form. The actors will walk off between scenes. They will start rewriting their own roles. And even when you solve all of that, they will still misread a stage direction in a way you did not anticipate.

These are not edge cases. They are the nature of the thing.

Autonomy does not come from intelligence. It comes from architecture: a stage that is explicitly named, a company that knows it belongs, a script that leaves nothing open, and a constraint layer that makes improvisation structurally impossible.

The intelligence is not in the agents. It never was. It is in the architecture that holds the production together.

I had built a system that could run without me. What I had not yet figured out was whether I could trust it to run without me well.