The Last Interface. And Everyone Is Fighting for It Right Now.

Search monetized the gap between a question and an answer. Asking collapses that gap. Every business model built on the gap is now structurally threatened — and the companies that built them know it.

There is a phrase people have started using without thinking about it. They say it casually, almost offhand, the way you describe a behavior that already feels like the obvious thing to do. The phrase is “Ask AI.”

Not “search for it.” Not “look it up.” Not “open the app.” Ask.

The significance is not in the words. It is in what the words reveal about where intention now lives. People are not shaping their desire to fit the grammar of a machine. They are expressing desire directly, in the language of thought, and expecting the system to handle the translation. This is not a feature. It is not a trend. It is the earliest behavioral signal of a structural shift that has happened four times before in the history of computing — and every time it happened, the companies that failed to see it spent a decade explaining why they lost.

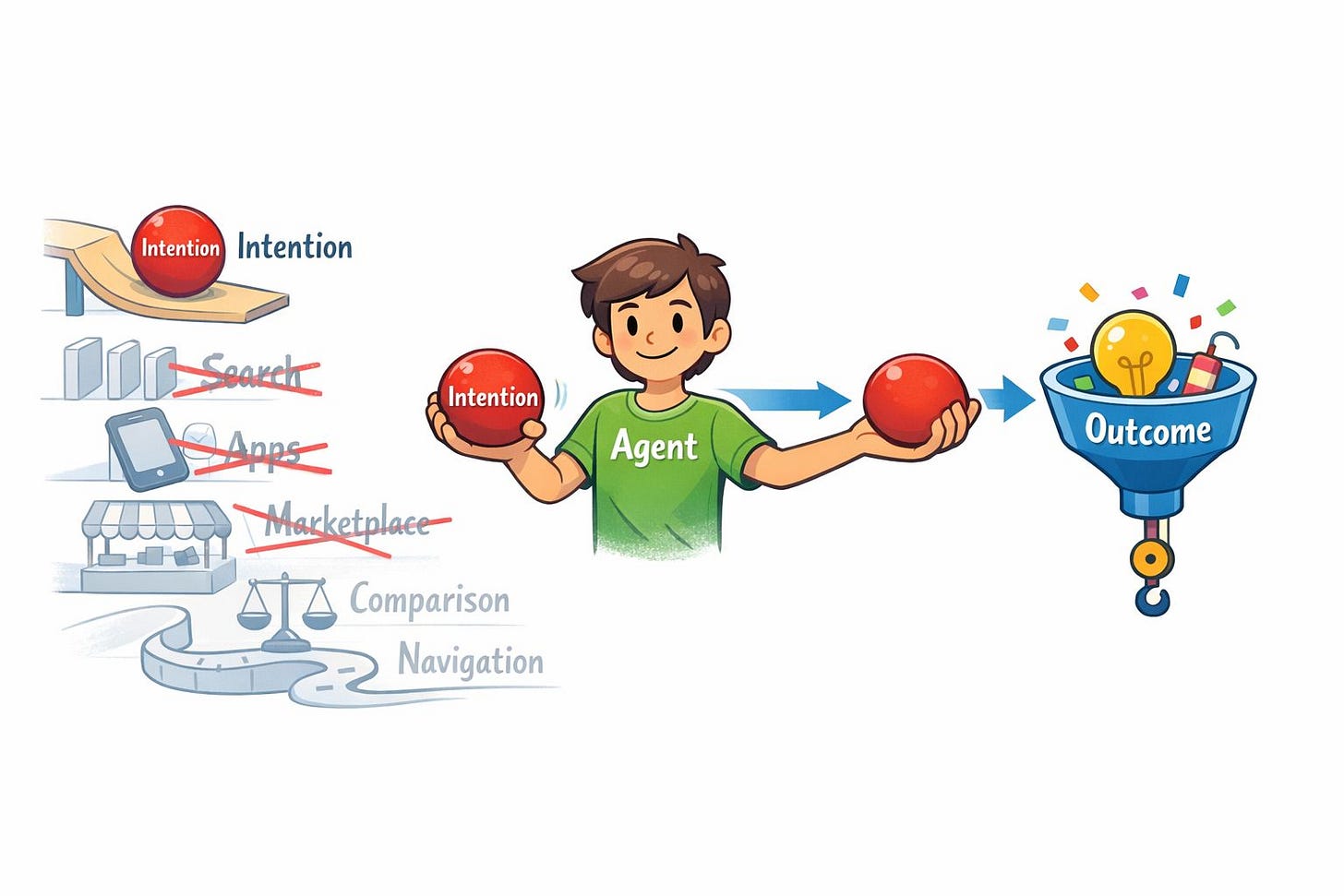

The dominant wrong interpretation is that AI assistants are a better search engine. A faster way to navigate. A smarter autocomplete. This interpretation is structurally incorrect, and the companies that believe it are already making the strategic errors that will define the next decade. What is actually happening is not an improvement to the existing intent layer. It is the replacement of it.

This essay is about that replacement, the mechanism behind it, and who wins.

The Interface Always Wins

Every major shift in computing is, at its root, a shift in how humans express intention. Not a shift in processing power. Not a shift in connectivity. A shift in the interface through which desire enters the machine. And the pattern is consistent enough to be called a law: the interface that compresses the most friction always becomes the new default. The interface that becomes the new default captures the intent layer. The company that captures the intent layer controls the architecture of everything beneath it.

The command line captured intention through syntax. You had to learn the grammar of the system to use it. When the graphical interface arrived, commercialized by Apple with the Macintosh in January 1984, the grammar disappeared. Intention became spatial. The translation burden moved from the human to the machine. Microsoft won the GUI era not because Windows was better than the Macintosh, but because it understood that the environment where intention was expressed was more valuable than the hardware running beneath it. Hardware became a commodity. The OS became the control point.

Google repeated the pattern in search. The browser delivered users to the search box. The search box delivered users to the results page. The results page was where the money lived. Google built more than $175 billion in annual search advertising revenue on a single insight: the most valuable moment in the digital economy is the moment before action. The moment a user reveals what they want. Google did not try to eliminate the gap between intention and outcome. It monetized the gap. Every result page was a surface. Every click was a transaction. Every comparison a user performed was an opportunity to influence the decision.

Apple repeated the pattern in mobile. The iPhone, announced in January 2007 and released that June, was not a better phone. It was a new intent layer. It sat between the user and every other digital system. The App Store, launched in July 2008, converted proximity to intention into a tax on every desire expressed through the device. The more than two billion active Apple devices in use today are not a market share statistic. They are billions of daily expressions of intention that route through Apple’s infrastructure.

The pattern has now repeated four times in forty years. A more efficient interface appears. Users migrate because efficiency is destiny. The new control point captures the intent layer. Everything built on the old intent layer becomes infrastructure or becomes irrelevant.

The fifth iteration has begun. And this one is different from the previous four in one critical way: there is no interface above intention. The agent is not a stepping stone to something more efficient. It is the compression limit. Language is the most direct path between a thought and a machine. Once that path is open, there is nowhere left to compress.

The Collapse of the Gap

Search monetized friction. Asking collapses it.

This is not a subtle distinction. It is the entire structural argument. When a user asks an agent for a recommendation, they do not open a browser. They do not navigate to a results page. They do not compare ten options. They do not click a sponsored listing. They ask. The agent interprets. The agent decides. The user receives an outcome.

The gap disappears. And every business model built on the gap disappears with it.

The numbers make the threat concrete. Google’s search advertising revenue was $175 billion in 2023, representing more than 55% of Alphabet’s total revenue. That revenue depends on users navigating through results. A 2024 study by SparkToro estimated that approximately 60% of Google searches already end without a click, a number that has risen consistently as Google surfaces more answers directly. AI Overviews, launched in Google Search in the United States in May 2024 and, by Google’s own projection, headed to more than one billion people by the end of that year, accelerated this pattern. Users get answers without clicking. The surface shrinks. The gap narrows. The revenue model strains.
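A back-of-the-envelope sketch shows why small shifts in zero-click behavior matter. It assumes, purely for illustration, that the monetizable surface scales linearly with the share of searches that end in a click. The function name and the 70% scenario are hypothetical; only the roughly 60% baseline comes from the SparkToro estimate.

```python
def remaining_click_surface(baseline_zero_click: float, new_zero_click: float) -> float:
    """Fraction of the baseline clicked-search surface that remains.

    Toy assumption (not taken from any study): the monetizable surface is
    proportional to the share of searches that end in a click.
    """
    if not 0.0 <= baseline_zero_click < 1.0:
        raise ValueError("baseline zero-click share must be in [0, 1)")
    if not 0.0 <= new_zero_click <= 1.0:
        raise ValueError("new zero-click share must be in [0, 1]")
    return (1.0 - new_zero_click) / (1.0 - baseline_zero_click)


# Baseline: roughly 60% of searches end without a click (SparkToro estimate).
# Hypothetical scenario: that share rises to 70%.
surface = remaining_click_surface(0.60, 0.70)
print(f"Remaining click surface: {surface:.0%}")
```

Under that simplifying assumption, a ten-point rise in the zero-click share removes a quarter of the clicked surface, because the loss is measured against the 40% of searches that still produced a click.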

This is not only a Google problem. It is the same structural pressure applied to every business that monetized a step in the funnel. The App Store generated $89 billion in revenue in 2022 by sitting between desire and the tool required to fulfill it. An agent that fulfills desires directly, without routing through an app store, is not a competing product. It is a bypass. Amazon generated roughly $47 billion in advertising revenue in 2023, largely by monetizing the comparison step. Every sponsored listing was valuable because users had to compare. An agent that compares on the user’s behalf and places the order directly does not compete with the marketplace. It eliminates the surface the marketplace monetized.

The dominant wrong narrative says these companies will integrate AI and preserve their positions. Some will adapt. But integration is not the same as control. A company that integrates an AI layer it does not own has added a dependency, not a moat. The question is not whether the incumbent integrates AI. The question is whether the intent layer lives inside the incumbent’s walls after the integration is complete.

The gap was the business. The agent closes the gap.

The Agent as Aggregator

To understand why the agent becomes the new dominant architecture, you need aggregation theory, the framework Ben Thompson developed at Stratechery.

Aggregators do not create supply. They aggregate demand. They sit between users and suppliers, and because they control access to users, suppliers must come to them. Google does not produce content. It aggregates access to content, and because every publisher needs Google’s users, publishers must optimize for Google’s algorithm. Apple does not build most of the apps on the iPhone. It aggregates access to iPhone users, and because developers need those users, developers must pay Apple a commission of up to 30% and follow Apple’s rules. Amazon does not manufacture most of what it sells. It aggregates purchasing intent, and because sellers need Amazon’s buyers, sellers must compete on Amazon’s terms.

The critical property of aggregation is that it is self-reinforcing. More users attract more suppliers. More suppliers attract more users. The aggregator sits in the middle and extracts value from every transaction. Once this loop closes, the aggregator becomes structurally dominant. It does not need to be the best at any single thing. It needs to be the default path between users and what users want.

The agent is the next aggregator. And it aggregates at a layer no prior aggregator has reached.

Search aggregated navigational intent. Users with a question came to Google. Google routed them to publishers. Google extracted value from the routing. But the user still had to navigate after the routing. The gap remained. The agent eliminates the routing step entirely. It does not send the user to a supplier. It retrieves, evaluates, and delivers the outcome directly. The supplier is no longer a destination. The supplier is a capability the agent calls.

This shift in the supply relationship is the mechanism that makes the agent structurally different from every prior aggregator. When Google aggregated publishers, publishers could still maintain direct relationships with users. A user who trusted a specific publication could navigate directly to it, bypassing Google. When Apple aggregated developers, users could still discover apps through word of mouth and brand recognition. The App Store was dominant but not total. When an agent aggregates outcomes, the supplier becomes invisible by design. The user does not know which API was called, which database was queried, which service fulfilled the request. The agent is the interface. The supplier is infrastructure.

This is the Aggregation Theory endpoint: a system that aggregates intention itself, at the level of language, before any other system is aware the intention exists.

Call this mechanism the Intent Collapse. The causal chain runs as follows: a user expresses intention in language; the agent captures the intention before any other system is invoked; suppliers become capabilities the agent calls rather than destinations users navigate to; capabilities become interchangeable because the user never sees them; the agent becomes the aggregator; the aggregator becomes the control point; everything else becomes infrastructure. Each step in this chain follows from the previous one with the same structural logic that made Google dominant over publishers and Apple dominant over developers. The difference is that every prior aggregator captured intention only after the user had already routed it through another interface. The agent captures intention at the moment it is first put into words.
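The chain can be sketched in a few lines of code. This is a minimal illustration, not a description of how any real agent works: the `Capability` and `Agent` classes, the supplier names, and the choice of lowest price as the selection rule are all hypothetical. The kind of intent is passed in explicitly, whereas a real agent would infer it from language.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Capability:
    """A supplier as the agent sees it: something to call, not a destination."""
    supplier: str                  # hypothetical supplier name, never shown to the user
    handles: str                   # the kind of intent this capability can fulfill
    price: float                   # illustrative selection criterion
    fulfill: Callable[[str], str]  # performs the work and returns an outcome


class Agent:
    def __init__(self, capabilities: List[Capability]) -> None:
        self._capabilities = capabilities

    def ask(self, intent_kind: str, request: str) -> str:
        # 1. The agent captures the intention before any supplier is involved.
        candidates = [c for c in self._capabilities if c.handles == intent_kind]
        if not candidates:
            raise LookupError(f"no capability for {intent_kind!r}")
        # 2. Suppliers are interchangeable: the agent picks one by its own rule.
        chosen = min(candidates, key=lambda c: c.price)
        # 3. The user receives only the outcome, not the supplier's identity.
        return chosen.fulfill(request)


agent = Agent([
    Capability("supplier_a", "book_flight", 320.0, lambda r: f"Booked: {r}"),
    Capability("supplier_b", "book_flight", 290.0, lambda r: f"Booked: {r}"),
])
print(agent.ask("book_flight", "Lisbon, Friday"))
```

Both suppliers return an identical outcome, so nothing in the printed result tells the user which one was called. That is the interchangeability step of the chain in miniature.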

Once the intent layer moves into the agent, every supplier in the digital economy faces the same choice: become legible to the agent or become invisible to the users the agent serves.

How Each Business Model Breaks

The five companies with the most to lose are not responding to the same threat. They are each responding to the specific way the agent collapses their particular business model. Understanding the pressure on each one explains the moves they are making.

Google. Business model: gap monetization. Revenue of $175 billion in search advertising in 2023 exists because users navigate through results — clicking, comparing, deciding. Each step is a monetizable surface. Threat: AI Overviews, launched May 2024, answers questions without producing clicks. The SparkToro study found 60% of searches already end without a click, a number rising before generative AI accelerated it further. Every answered question without a click is a withdrawal from the account that funds everything else. Forced move: integrate Gemini across Search, Chrome, Android, Gmail, and Maps to keep the intent layer inside Google’s walls. Strategic consequence: Google is choosing controlled cannibalization over uncontrolled displacement. The risk is that the cannibalization rate exceeds the revenue it protects.

Apple. Business model: device proximity. The App Store’s commission of up to 30% on $89 billion in 2022 revenue exists because Apple controls distribution to the surface closest to the user’s intention. Threat: if the agent is not a native layer of the device, it becomes a third-party service that routes around Apple’s infrastructure. The threat is not a better agent. It is a better agent outside Apple’s architecture. Forced move: Apple Intelligence, announced at WWDC in June 2024, embeds the agent into the keyboard, lock screen, and every input surface on the device. Strategic consequence: Apple converts device proximity into agent proximity. The risk is that the agent requires capabilities Apple cannot build natively, forcing dependence on OpenAI or other external models Apple does not control.

Microsoft. Business model: enterprise bundling. Windows, Office, Teams, and Azure form interlocking dependencies that make switching costly for enterprise customers. Threat: the agent could become a commodity layer any vendor can drop into the enterprise workflow, breaking the bundle’s differentiation. Forced move: Copilot launched in Microsoft 365 in November 2023 and in Windows in September 2023, embedding the agent inside the existing bundle before competitors can establish an independent beachhead. Strategic consequence: Microsoft converts its distribution advantage into the container for the new intent layer. The risk is that Copilot’s performance in enterprise workflows does not justify the premium Microsoft needs to defend its pricing.

Meta. Business model: conversational surface monetization. WhatsApp’s 2 billion monthly active users, Instagram’s 2 billion, and Messenger’s 1 billion exist because people express desire in conversational form. Asking is the native interface of conversation. Threat: an agent that lives outside Meta’s surfaces captures the conversational intent that currently flows through Meta’s platforms. Forced move: Meta AI, introduced in September 2023 and rolled out across WhatsApp, Instagram, Facebook, and Messenger in April 2024, embeds the agent into those surfaces before users develop habits with external agents. Strategic consequence: Meta’s distribution advantage converts directly into agent distribution. The risk is that Meta’s advertising model and agent utility are structurally in tension: an agent that genuinely serves user intention does not also serve advertiser intention.

Amazon. Business model: comparison monetization. The roughly $47 billion in advertising revenue Amazon reported for 2023 depends largely on users performing the comparison step inside Amazon’s interface. Sponsored listings are valuable because users must decide. Threat: an agent that performs the comparison on behalf of the user and routes the purchase to the optimal fulfillment option eliminates the comparison step entirely. Forced move: Amazon’s investment in Anthropic, announced in September 2023 with a commitment of up to $4 billion, is a positioning bet. Amazon is trying to become the execution layer the agent calls rather than the interface the agent replaces. Strategic consequence: if Amazon succeeds, its fulfillment network becomes the infrastructure the agent relies on. The risk is that a capable agent treats Amazon’s fulfillment as one option among many, stripping the premium Amazon captures by controlling both discovery and delivery.

Each of these companies is making the same underlying calculation: the agent is coming regardless, and the only question is whether they control the surface it runs on or become a commodity supplier to the company that does.

What This Means for the People Who Build What Comes Next

For founders building on AI: the distribution question has changed structurally. In the search era, distribution meant ranking. In the mobile era, it meant the App Store. In the agent era, it means being the capability the agent invokes. If your product requires a user to navigate to it directly, your distribution model is threatened not by a competitor but by the interface layer itself. The question is not how to acquire users through old channels. The question is how to become the service the agent calls when a user expresses the desire your product was built to fulfill. The founders who answer this in 2025 will define their categories. The founders who answer it in 2027 will be paying for placement inside someone else’s agent.

For engineering leaders and CTOs: the interface your users interact with is no longer fully under your control. An agent sitting above your product can intercept intention before it reaches you, reframe the request, and route it to a competitor the agent trusts more or has been trained to prefer. The defensive architecture question is not how to build a better UI. It is how to ensure your product is legible to agents that will mediate access to it. APIs are not enough. You need structured capability descriptions that agents can discover, evaluate, and invoke with confidence. If your product cannot be understood by an agent in the time it takes to process a query, your product becomes invisible to the users who rely on agents to decide for them.
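What a structured capability description might look like can be sketched briefly. The example below is an assumption-laden illustration: the `reserve_table` capability, its field names, and the `validate_call` helper are all hypothetical and follow no particular published standard. Production systems commonly express the same idea with JSON Schema-style tool definitions.

```python
import json

# Hypothetical capability description; the field names are illustrative
# and do not follow any particular published standard.
CAPABILITY = {
    "name": "reserve_table",
    "description": "Reserve a restaurant table for a party at a given time.",
    "parameters": {
        "restaurant_id": {"type": "string", "required": True},
        "party_size": {"type": "integer", "required": True},
        "time": {"type": "string", "required": True},
        "notes": {"type": "string", "required": False},
    },
}

_PYTHON_TYPES = {"string": str, "integer": int}


def validate_call(capability: dict, arguments: dict) -> list:
    """Return a list of problems; an empty list means the call is well-formed."""
    problems = []
    params = capability["parameters"]
    for name, spec in params.items():
        if name not in arguments:
            if spec["required"]:
                problems.append(f"missing required parameter: {name}")
            continue
        if not isinstance(arguments[name], _PYTHON_TYPES[spec["type"]]):
            problems.append(f"{name} should be of type {spec['type']}")
    for name in arguments:
        if name not in params:
            problems.append(f"unknown parameter: {name}")
    return problems


# The description serializes to JSON, so an agent can fetch and inspect it.
print(json.dumps(CAPABILITY, indent=2))
print(validate_call(CAPABILITY, {"restaurant_id": "r-42", "party_size": 4}))
```

The final call omits `time`, so the check reports one missing required parameter. The point of the design is that an agent can decide whether it is able to invoke the capability, and with which arguments, without a human reading documentation first.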

For investors: the intent layer is the most valuable layer in the stack and it is actively contested right now. Model benchmarks are the wrong signal. The right signal is surface control: which company owns the interface where intention is expressed for the users that matter to your portfolio. A model that scores highest on every benchmark but routes through Google’s infrastructure is Google’s agent. The question for every AI investment is not capability. It is whether the company controls a surface where intention lives, or whether it is a supplier to a company that does. The moat in this era will not be built from model quality, which is commoditizing rapidly. DeepSeek drew attention in early 2025 for approaching frontier-grade performance with a model whose final training run reportedly cost under $6 million in compute. The moat will be built from memory: the accumulated record of preferences, routines, and history that makes the agent impossible to replace without losing the continuity of your digital life.

The last interface does not ask for your attention. It accepts your intention directly.

There is no interface above intention.

The structural argument: Every major interface shift in computing is a shift in the control point where human intention enters the machine. The interface that compresses the most friction always wins, and the company that controls that interface controls the architecture of everything below it. Search monetized the gap between a question and an answer, building $175 billion in annual revenue for Google on navigational friction alone. AI agents collapse that gap entirely: users express desire in language, the agent interprets and executes, and every monetizable step in the funnel disappears. This is the mechanism of aggregation applied to intention itself — the Intent Collapse — where every system that previously sat between thought and outcome becomes a commodity supplier to the aggregator that owns the interface. Google, Apple, Microsoft, Meta, and Amazon are each responding to the specific way the agent destroys their particular business model: gap monetization, device proximity, enterprise bundling, conversational surface, and comparison monetization respectively. The moat in this war is not model quality, which is commoditizing, but memory — the accumulated record that makes the agent irreplaceable. There is no interface above intention. The company that captures it wins the next decade.

