The Missing Layer: Why AI Can’t Build Systems That Last

How a 350,000‑Line System Revealed AI’s Hidden Architectural Flaw

The Edge of the Map

Every system has a boundary, though most people never reach it. They operate comfortably inside the illuminated zone, where abstractions hold, where tools behave predictably, where the architecture feels stable enough to trust. But if you build long enough and deep enough, you eventually run out of map. You step past the edge of what the system was designed to support, and the underlying physics reveal themselves. It is not a dramatic moment. It is a quiet one: the point where the system stops pretending and begins telling the truth.

I reached that point not because I was chasing it, but because the work pushed me there. Over the last year I built a full production platform alone: hundreds of thousands of lines of code, dozens of services, a complete CI/CD pipeline, strict validation and sanitization, multi-locale UI, event-driven flows, Terraform-managed infrastructure, and a test suite with thousands of assertions. Not a prototype. Not a demo. A real system, the kind that normally requires a team, built with AI embedded directly into the engineering workflow inside a tightly controlled process: surgical-change rules, strict invariants, static analysis enforcement, sanitization funnels, isolated indices, namespaced caches, a TDD roadmap that leaves no ambiguity.

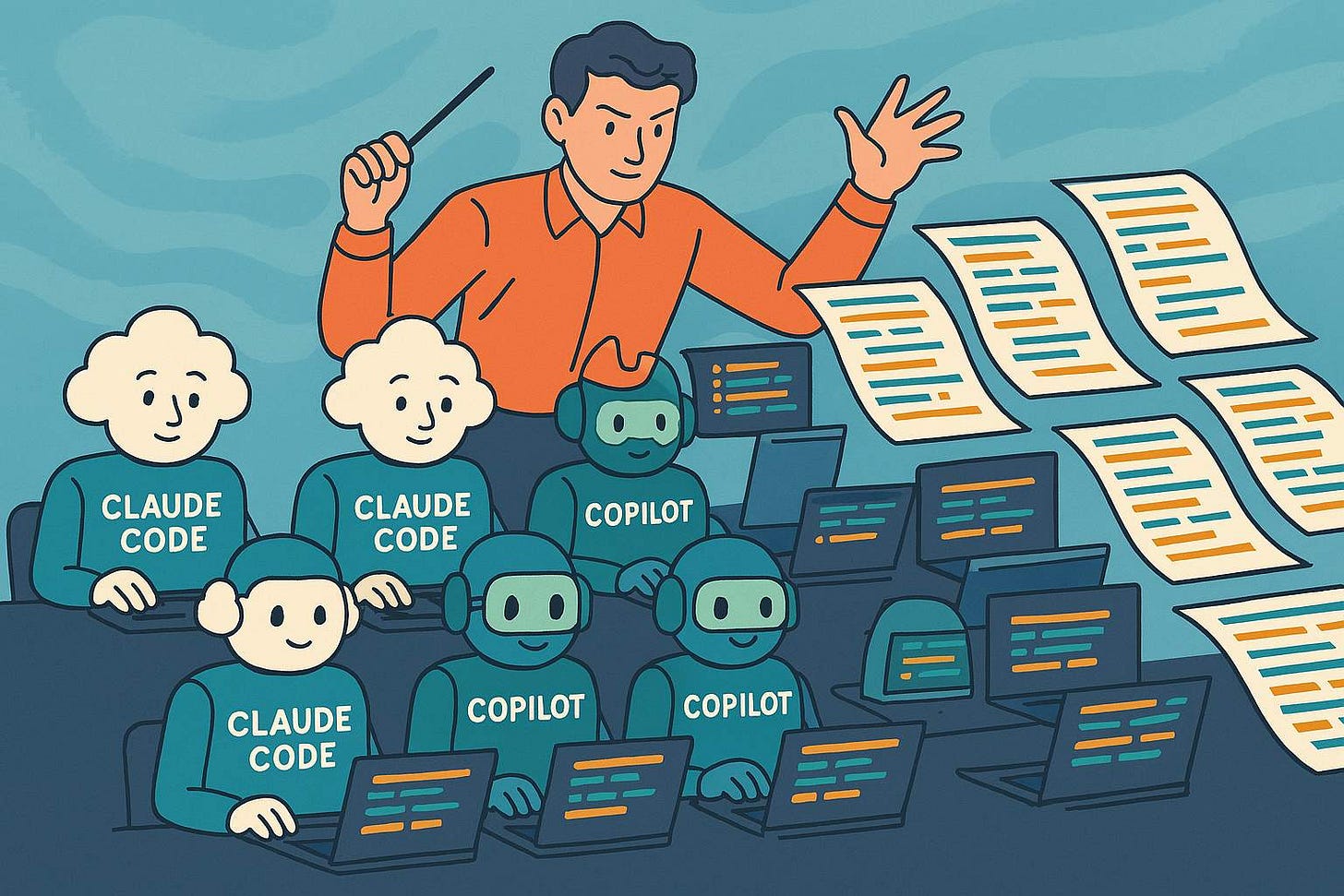

Inside that environment, the AI behaved like a brilliant engineer with amnesia. It could execute a step perfectly, then forget the architectural decision that made the step valid. It could fix a bug in one file while silently breaking invariants in others. It reinvented logic that already existed. It forgot constraints that were explicitly documented. It broke contracts enforced by tests. I was not debugging code. I was debugging the model’s memory.

This is not an isolated experience. Cognition’s Devin, launched in March 2024 as the first “fully autonomous software engineer,” resolved 13.86% of issues on SWE-bench, a benchmark built from real-world GitHub issues. Independent testing by Answer.AI in January 2025 found it completed 3 of 20 real tasks. Not because the model was weak. Because the tasks required holding architectural context across multiple files, multiple sessions, and multiple decisions, and the substrate had no mechanism to maintain that context. GitHub Copilot Workspace shows the same failure mode at scale: impressive in isolation, brittle in context.

If a system as deterministic, disciplined, constrained, and architecturally fortified as mine still collapses under AI drift, then every team building with AI today is sitting on the same fault line. They just have not scaled far enough to feel it yet. The failure is not in the code. It is in the substrate. And the substrate is the same for everyone.

I wrote about the engineering process behind this system in detail here: the constraints, the invariants, and the architectural decisions that made it possible to build at this scale alone. This essay is about what that process revealed.

The Foundation Built on Prediction

Every technological wave begins with a convenient illusion. In the early cloud era, the illusion was that servers had disappeared. In the mobile era, that apps were small. In the social era, that networks grew by themselves. In the AI era, the illusion is that intelligence is enough: that if a model can reason, generate, and follow instructions, the rest will simply emerge.

Illusions collapse the moment you try to build something real on top of them. Not a demo. Not a prototype. A system with constraints, compliance, scale, and consequences.

LLMs were built to predict the next token. Everything else (reasoning, planning, tool use, multi-step workflows) is emergent behavior layered on top of a statistical engine with no concept of time, boundaries, or consequences. It can simulate understanding but it cannot maintain it. It can generate structure but it cannot preserve it. It can follow instructions but it cannot remember why those instructions matter. The industry keeps treating this as a temporary inconvenience that bigger models or longer windows will solve. But scale does not change the nature of the substrate. A larger prediction engine is still a prediction engine.

This is why the current AI stack collapses under real load. Not because the models are weak, but because the architecture around them is missing. The ecosystem is trying to build long-lived systems on top of stateless engines that forget everything the moment the buffer resets. And the more complex the system becomes, the more sharply the flaw asserts itself.

The industry’s response has been predictable: more agents, more context, more orchestration, more clever hacks. LangChain, AutoGPT, CrewAI, and a dozen successors have each promised to solve coordination through layering: more wrappers, more memory tricks, more retrieval. Each has collapsed under production load for the same reason. They increase the surface area of failure. They multiply the points of drift. They deepen the instability while creating the illusion of progress. They are attempts to build skyscrapers by adding scaffolding instead of pouring a foundation.

The deeper problem is that the industry is reaching for the wrong analogy. Engineers use ADRs, documentation, and invariant testing to maintain coherence in large systems. But these tools work only because humans maintain a persistent mental model of the system. They constrain people, not models. An LLM cannot internalize an ADR. It cannot carry architectural decisions forward in time. It cannot enforce invariants unless something outside the model enforces them. Human engineering practice assumes a world model in the engineer’s head. AI has no such world model.

That is the gap. And it is structural.

The Missing Layer

Once you understand that the entire AI ecosystem is built on prediction rather than architecture, the question becomes precise: what is the layer that would turn intelligence into engineering, reasoning into systems, and generation into continuity?

The answer is not speculative. It is the same layer every other computing revolution required before it could scale beyond demos and into infrastructure: persistent, enforceable, system-level memory.

Not memory as a buffer. Not memory as retrieval. Not memory as a clever way to stuff more tokens into a window. Architectural memory: the kind that survives time, scale, and change. The kind that stores decisions rather than text. The kind that enforces constraints rather than patterns. The kind that allows a system to evolve without dissolving into inconsistency.

Every real platform in computing history has had this layer. Operating systems have it: the process model defines what any program can do and where. Compilers have it: the type system enforces contracts across the entire codebase at build time. Databases have it: the schema and transaction model enforce consistency across every write. AWS IAM has it: access is denied by default and must be granted explicitly, never assumed. The App Store sandbox has it: applications operate inside defined permission scopes regardless of what they request. In each case, the layer does not instruct the system what to do. It defines what the system is allowed to do. The distinction is not cosmetic. It is the difference between a tool and an architecture.

AI has no such layer. And without it, everything built on top of AI inherits the instability of the substrate.

What would it look like? Not a wrapper. Not a plugin. Not a prompt. A substrate: a persistent, queryable, updatable architectural graph that the model reads from, writes to, and is constrained by. A system that stores architectural decisions, invariants, domain rules, interfaces, dependencies, test expectations, and refactor boundaries. A system that validates every AI action against that memory. A system that rejects changes that violate constraints. A system that forces the model to operate inside a structure it cannot drift away from because the structure is enforced at a layer beneath it.
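To make the idea concrete, here is a minimal sketch in Python of the validate-and-reject step described above. It is an illustration under stated assumptions, not the system from this essay: `ProposedChange`, `Invariant`, `ArchitecturalMemory`, and the example rule are all hypothetical names, and a real substrate would need a persistent graph rather than an in-memory list.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class ProposedChange:
    """A change an AI agent wants to make (hypothetical shape)."""
    path: str                           # file the change touches
    new_imports: tuple[str, ...] = ()   # modules the change would import


@dataclass(frozen=True)
class Invariant:
    """A recorded architectural decision plus a machine-checkable rule."""
    name: str
    rationale: str
    check: Callable[[ProposedChange], bool]  # True means the change is allowed


@dataclass
class ArchitecturalMemory:
    """Holds invariants and validates every proposed change against them."""
    invariants: list[Invariant] = field(default_factory=list)

    def record(self, invariant: Invariant) -> None:
        self.invariants.append(invariant)

    def validate(self, change: ProposedChange) -> list[str]:
        """Return the names of all invariants the change would violate."""
        return [inv.name for inv in self.invariants if not inv.check(change)]


def ui_stays_out_of_db(change: ProposedChange) -> bool:
    """Hypothetical rule: files under ui/ must not import the db package."""
    touches_ui = change.path.startswith("ui/")
    imports_db = any(m.split(".")[0] == "db" for m in change.new_imports)
    return not (touches_ui and imports_db)


memory = ArchitecturalMemory()
memory.record(Invariant(
    name="ui-does-not-import-db",
    rationale="UI code reaches data only through the service layer.",
    check=ui_stays_out_of_db,
))

# A change that breaks the rule is reported; a compliant one is not.
rejected = memory.validate(ProposedChange("ui/cart.py", ("db.orders",)))
accepted = memory.validate(ProposedChange("services/orders.py", ("db.orders",)))
```

The point of the sketch is where the rule lives: outside the model, in a structure that stores the rationale alongside the check and is consulted on every change, whatever the model happens to remember.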

This is not a nice-to-have. It is the only way AI can behave like an engineer rather than a generator. Engineers do not rebuild the world from scratch every time they open a file. They operate inside a persistent mental model of the system, a model that constrains their decisions as much as it informs them. Without that model, engineering collapses into improvisation. And improvisation does not build systems.

The missing layer is the difference between a prediction engine and a platform.

Why No One Has Built It

Once you understand the missing layer, you also understand why it does not exist. It is not because the idea is obscure. It is because the incentives of the current ecosystem make it structurally impossible for the people building AI products to build the foundation those products require.

The missing layer is infrastructure: deep, architectural infrastructure. And infrastructure is the one thing the modern startup ecosystem is optimized to avoid. Startups are built around speed, not structure. Around demos, not systems. Around fundraising narratives, not architectural integrity. The incubator logic is: ship an MVP, prove demand, fix the foundation later. But the missing layer cannot be added later. It is the foundation. Foundations are not something you retrofit once the building is already standing.

This is why every attempt to build “AI engineering tools” ends up as a thin wrapper around someone else’s model. The economics force it. Investors want traction. Founders want runway. Teams want to ship. No one wants to spend a year building a memory substrate that produces no demo, no viral moment, no immediate revenue. Yet that is exactly what the missing layer requires: deep systems work, compiler-level thinking, distributed-systems discipline, and the patience to build something that will not look impressive until the moment it becomes indispensable.

The frontier labs are equally misaligned. Anthropic, OpenAI, and Google DeepMind are racing to push the boundary of intelligence, not the boundary of continuity. Their revenue comes from tokens, not architecture. Their roadmaps are shaped by capability benchmarks, not system-level stability. The missing layer sits in the blind spot between two worlds: too deep for startups, too unglamorous for labs, too foundational for product teams, too long-term for investors.

This is why the ecosystem keeps producing the same pattern. A new agent framework gains attention, collapses under real use, and is replaced by the next one. A new coding assistant dazzles in demos, fails in production, and quietly pivots. None of these failures are surprising. They are the predictable output of an ecosystem trying to build cathedrals on sand.

The reason the layer does not exist is not that no one sees the need. It is that the people who understand the need are not the ones incentivized to build it, and the people incentivized to build are not the ones who understand the need.

The Strategic Opportunity

Every technological shift reaches a moment when capability stops being the bottleneck and architecture becomes the constraint. Cloud reached that moment when AWS realized that selling compute was less valuable than selling the abstraction of compute. Mobile reached it when Apple realized the App Store was more powerful than the iPhone. Social reached it when Facebook realized the social graph was more valuable than the feed. AI is approaching the same inflection now, but the industry has not recognized it. Everyone is staring at the models (bigger, faster, more capable) while the real leverage is accumulating in the layer beneath them.

The company that builds the architectural memory layer will not win because it has the best model. It will win because it controls the substrate that every other AI system depends on. The memory layer is the operating system of AI engineering. Once it exists, the models above it become interchangeable. The agents become interchangeable. The IDEs and orchestration layers and workflow tools all become interchangeable. The only thing that is not interchangeable is the layer that makes continuity possible.

This is the same pattern that has repeated across every major computing wave. AWS controls cloud because it controls the abstraction of compute. Stripe controls payments because it controls the primitive of money movement. Nvidia controls AI training because it controls the hardware that everything else depends on. The architectural memory layer is that primitive for AI engineering: the thing that sits beneath everything, accumulates power because building without it becomes impossible, and becomes the default because the alternative is building on sand.

I know what this layer must be not because I theorized about it, but because the system forced it into view. Every time the AI forgot an invariant, I had to build a mechanism to enforce it. Every time it drifted from the architecture, I had to build a constraint to prevent it. Every time it broke a contract, I had to build a validator to catch it. The missing layer is not an idea. It is the accumulation of every mechanism I was forced to build to keep the system coherent as it grew beyond what a prediction engine can maintain alone.

The industry has spent two years trying to stretch prediction into places it was never meant to go: trying to turn a statistical engine into an engineer, a context window into continuity, a swarm of agents into architecture. But systems do not care about ambition. They care about physics. And the physics of the current AI stack are clear: without persistent, enforceable memory of the world it is modifying, no model, no matter how capable, can build or maintain a system of any meaningful scale.

Every major platform shift in computing has followed the same pattern. First capability. Then chaos. Then the realization that capability is not enough. Then the emergence of the layer that makes capability usable, stable, and durable. AI is standing at that threshold now. The models are extraordinary. The systems built on top of them are fragile. The gap between the two is not intelligence. It is the absence of a substrate that remembers.

The next era of software will not be defined by who trains the largest model. It will be defined by who builds the layer that gives those models a world to stand on.

When it arrives, it will not feel like a breakthrough. It will feel like gravity: the thing that was always supposed to be there.

What This Means For the People Building on the Current Stack

For engineering leaders and CTOs: The drift you are experiencing in your AI-assisted workflows is not a prompting problem. It is a substrate problem. Every mitigation you build (better context management, stricter review processes, more constrained agent scopes) is a patch on a missing foundation. The question to ask is not “how do we prompt more carefully” but “at what scale does our current approach collapse, and are we building toward that scale.” If you are, the architectural memory layer is not a future concern. It is a present one.

For founders building on AI: The agent frameworks and orchestration layers that feel like infrastructure today are not infrastructure. They are capability wrappers: useful at small scale, brittle at production scale, replaceable when the memory layer arrives. The defensible position is not the wrapper. It is the domain knowledge, the compliance requirements, the data relationships, and the architectural decisions that the memory layer will need to encode. Build those now, before the substrate exists, and you will have the content that makes the substrate valuable.

For investors: The current wave of AI tooling investment is largely funding scaffolding, not foundations. The companies that will define the next decade are the ones building the layer beneath the scaffolding, the ones whose product becomes more valuable as every other AI tool depends on it. The signal to look for is not capability demos. It is production deployments at scale, with failure modes documented, with architectural constraints already encoded, with founders who have lived through the physics of large systems and understand exactly which layer is missing.

For the frontier labs: The architectural memory layer is not a feature you can bolt onto a model. It is a separate system with separate requirements: persistence, enforceability, versioning, schema management, constraint validation. The labs that treat it as a model capability will build the wrong thing. The labs that treat it as infrastructure will build the right thing too slowly. The most likely outcome is that it is built by people outside the labs, from the production floor up, by teams who have already felt the substrate collapse under them and understand what the replacement must be.
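As a rough illustration of two of those requirements, persistence and versioning, the sketch below keeps an append-only log of decisions in a JSON file. Everything here is an assumption made for the example: the `Decision` and `DecisionLog` names, the JSON file format, and the integer versioning scheme are hypothetical, and enforceability, schema management, and constraint validation are left out entirely.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass(frozen=True)
class Decision:
    key: str      # stable identifier, e.g. "cache.namespacing" (hypothetical)
    version: int  # incremented each time the decision is revised
    text: str     # the decision as stated at this version


class DecisionLog:
    """Append-only log: revisions add entries, earlier ones are never overwritten."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.entries: list[Decision] = []
        if path.exists():
            # Reload history so decisions survive across sessions.
            raw = json.loads(path.read_text(encoding="utf-8"))
            self.entries = [Decision(**item) for item in raw]

    def current(self, key: str) -> Decision | None:
        """Return the latest version of a decision, or None if never recorded."""
        matches = [e for e in self.entries if e.key == key]
        return matches[-1] if matches else None

    def revise(self, key: str, text: str) -> Decision:
        """Record a new version of a decision and persist the full history."""
        latest = self.current(key)
        decision = Decision(key, latest.version + 1 if latest else 1, text)
        self.entries.append(decision)
        payload = json.dumps([asdict(e) for e in self.entries], indent=2)
        self.path.write_text(payload, encoding="utf-8")
        return decision
```

Opening a new `DecisionLog` on the same path reloads every earlier version, which is the property a context window lacks: the history of a decision outlives the session that made it.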

The missing layer is not a matter of speculation. It is a matter of time, and of who builds it first.

The structural takeaway: The AI ecosystem is built on a foundation of prediction, not architecture. LLMs generate structure but cannot preserve it: they have no persistent memory of the systems they modify, no mechanism to enforce invariants across sessions, no concept of architectural continuity. Cognition’s Devin showed 13.86% on SWE-bench. LangChain, AutoGPT, and CrewAI have each collapsed under production load for the same structural reason. The missing layer is not a model capability. It is a persistent, enforceable architectural memory substrate that stores decisions, validates constraints, and gives AI the ability to modify systems rather than just generate fragments of them. Every platform shift in computing history required this layer before it could scale from demos to infrastructure. AI is at that threshold now. The company that builds this layer will not have the best model. It will have the substrate that every other model depends on.
